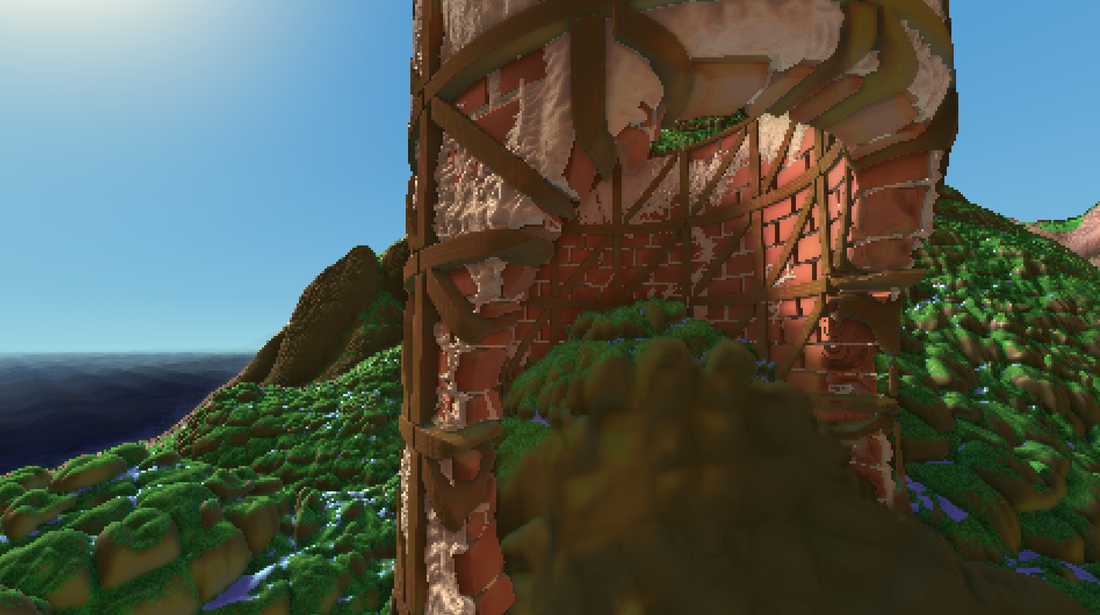

What is the next step for video games?

Voxel Quest is (let's be honest!) probably more of a research project than a game. It is an attempt to explore new areas in rendering, procedural generation, simulation, and AI. At this point, it is entirely free and I work on it as a hobby in my spare time.


Procedural

Everything in Voxel Quest is generated on-the-fly with math and algorithms. This means that not only is everything small enough to share easily, but that no external tools are required to create new content in the engine. The players are inherently the creators.


Volumetric

Most games are literally paper-thin, represented by polygons and complex textures that are difficult to manipulate dynamically. Voxel Quest is fully volumetric - anything can be easily created or destroyed with no precalculation.


Simulation

Static worlds feel more like movies than video games. Voxel Quest attempts to take advantage of the interactive nature of video games and empower users with real agency. Every action you take means something, and yields emergent results.


Press and Accolades


Hacker News:


Voxel Quest has been viewed by over a million people worldwide, even though it is still in its infancy. Here is what some of them are saying:

"I wanted to give more praise like everyone else, but I just keep going back to watching and I don't really have anything to say Jaw dropped into a bottomless pit" - Menko

"Damn dude! Seriously incredible work! Brilliant!" - mattli911

"What you're doing is so beautiful, the terrain is great, the building system looks AMAZING ... holy sh__" - Tactful Dan

"[After some time watching it] this was the moment I lost it. Holy sh__ man thats so damn amazing" - Deer Viehch

"Holy fark. This is gorgeous!" - N00Bella

"Holy mother of procedural butts. This looks better and better with each update." - Flosoph

"Started to follow this development recently and I'm impressed. The building is very sweet and everything is very interactive. The perfect base to have a lot of fun!" - Zimnel Redoran

"Hi this is absolutely amazing, it is really clever the way you have done this. I had a dream to make a game, and I was planning on doing it with small cubes, like you have. I couldn't help noticing precise, perfect details like when you walked into a weapon on the ground. It really is great. I hope all goes well in the coming year." - repeater64

"Your landscape generations and water/coast shaders are utterly stunning. Gorgeous work!" - Jack Sainsbury

"I normally don't comment on dev videos but this hit me in such an amazing way." - NoTimeLikeThePresent

"God there are some clever people around... this is absolutely incredible." - CatVader70

"That looks really good, if your a one or two person developer team then that makes it really REALLY impressive." - Linus Levitt

"This is damn impressive stuff! I would love to horse around with this. :)" - Dana Diedreich

"Wow...that looks amazing. Hopefully someday I'll have the time/dedication to get to that level of a voxel engine. Very nice work!" - Daniel Gleason

"Gorgeous in many ways. Keep going strong, the money will follow quality I am sure" - Terry Wolfe

"I can't begin to imagine all the kinds of games that could benefit from an engine like this. An RTS using this would be sweet. I hope the future of games is more like this and less like the current AAA FPS/Action game monotony. Just pure creativity and skilled programming." - Aza Industries

"Welp, can't wait to waste my life on this when it's completed." - Aaron Cohen

"Whooooooaaaaaa! I had only seen you doodle around with random rocky ground, nothing even resembling a normal world! This is super impressive and my mind is blown! It looks crazy nice! Looking forward to the future developments. That everything is volumetric is just insane. Super nice :D" - Andreas Aronsson

"Holy sh__ this looks amazing. How is the data stored? It looks amazing when the layers builds up like that, it really makes the game (well..) looks solid. Like stuff are actually there." - prof89

"I arrived expecting another bad minecraft clone. I left with a great deal of hope." - IndustrialBonecraft

"This looks really performance intensive but really really REALLY cool. The water looks realtime and the lighting looks amazing; nighttime looks really nice in this. Moddable? I hope someone makes an Xcom total conversion because this looks absolutely kick-ass." - Xerxes

"What made you so smart. Did you get bitten by a radioactive stephen hawking or something. But seriously nice work. I love watching these videos of your tech! :)" - DeadlyApples666

"Woooaahh! Never thought you could achieve such render distance with your engine! It really is a dramatic change from your prevous videos. My god, this is gonna be so awesome! This engine is the percect match of performance and visuals, love it!" - JohnyK07

"This is bloody amazing! Especially compared what it was before; there's something just simply mind-boggling about the whole thing..." - Dagothig

"This looks really amazing. I have screwed around a lot with customized terrain generation in Minecraft a lot, but I was always disappointed to see the limited height and render distance...but this...that spectacular view from the top of the mountain, that's some serious stuff." - OhNooez

"Wow, Gavan! I have not looked at your project in months, and I am completely blown away! This is looking really amazing! The level of detail, the view distance, the biomes all coming together... That freedom of camera movement. The sheer scope of this project is going to change video games in my opinion. Great work, looking forward to more!" - Trevor Chritensen

"It looks sooooooooo good. :D The distances in the game now are mind-boggling." - Talvieno

"I really like it's style. It reminds me of the ninties, a time where bad camera perspectives were never an issue. I wanna support this wtih spreading on forums I know." - krux02

"This is insane, I hope this is new gen of gaming" - Berk Can

"Dude you have skill! This looks awesome :) i hope to play with it someday." - Nova

"Looking awesome mate :D" - Benjamin Porter

"This is so cool! You're doing such great work." - flintsteel7

"Looks great! I am a backer. Good luck!" - @RichardGarriott

"Voxel Quest, developed by @gavanw, is the 2nd project this year that looks promising enough for me to put some $ in." - SeargeDP

"Voxel Quest by @gavanw kickstarter: Super hard-core procgen voxel engine and sandbox rpg." - @lexaloffle

"Very excited to see where this gem goes!" - @proceduralguy

"After having watched the rest of the Voxel Quest KS video, I backed it HARDER" - @Data01

"I just backed Voxel Quest on @Kickstarter and you should too." - @KirkLGilmore

"Voxel Quest is a really promising looking game / voxel engine, check it out" - @n_lap

"Check out Voxel Quest! you will not regret. ;)" - @malkvi86

"This looks incredibly awesome. I can only imagine the #gamedev implications!" - @StartUp_Studios

"I found a project that I am really excited about #VoxelQuest" - @SeamusFD

"This is the most interesting game engine I've seen in ages. Only just noticed it was on ks. Back it now. Now." - @docjaq

"Procedural, AI-driven, infinite, dynamic, story-focused...and effing beautiful" - @NDoomsday82

"Voxel Quest is something really special and different. Check out why." - @petersdreamgame

"Voxel Quest features an amazing voxel engine and procedural generation, hats off to @gavanw" - @Lewpwen

"Voxel Quest by Gavan Woolery - you guys NEED to check this out. He's been working hard on it and wont disapoint you!" - @y2bcrazy

"Just backed Voxel Quest - help @gavanw cause the thing is sick" - @ondrejsynacek

"A worthy kickstarter game. I mean IT LOOKS AWESOME worthy: Voxel Quest by Gavan Woolery" - @gaarlicbread

"Voxel Quest by Gavan Woolery is, IMO, the most important #gamedev campaign ever" - ChiefMigizi

"I haven't seen a game engine this jaw dropping since the Infinity Tech Demo in 2010" - @ludomation

"Holy crap Voxel Quest looks awesome" - @drhayes

"You, my friend, are an inspiration to computer scientists everywhere. High hopes for this!" - Soga

"Looks like a fantastic engine." - obfuse

"There's some incredibly impressive tech, very excited to see where you go from here." - Noogy

"This is amazing! You have my vote and purchase when this innevitably gets greenlit." - Lik

"...I upvoted this because your video was, frankly, jaw dropping. I code myself (even written some procedural landscape generation programs in the past) and this leaves me very, very impressed." - Ratty

"Such a pretty game, a brilliant concept, and I've got to give you credit for the ammount of coding hours that went into this game. Good on you!" - Co

"The engine looks impressive! Grats for the hard work. I'm eager to see gameplay demos and I really love that music :D" - hed

"The work you have done here is amazing. You should be proud of the giant leap forward yo have made in procedural generation." - WaKKO151

"This looks beautiful. If you can deliver what you've described, I think it will be an amazing game!" - Setablaze777

"Crazy ambitious. I love it! :) For being "just a tech demo", I can see there's tremendous potential. What I'm really interested about is your AI and the emergent storytelling it can create. Hoping to see more from you soon ! :)" - catmorbid

"I'm impressed" - Zui

"Finally a voxel game that looks good, you have my vote." - jorlin

"So beautiful, that I fell down from my chair crying Really, awesome project." - FaNaT

"Omg, I HAVE TO get my fingers on that game. Can't wait to play it." - schrottinator

"Wow I love the look of this game cant wait for it to come out! Keep up the good work :D" - TwilightTenshi

"This seems really amazing and I'm really looking forward to seeing where this goes." - 30-50 feral pyros

"Agreed, this is looking ace. Looking forward to seeing where this game goes." - tony.giov

"Actually, I'm very intrigued and can't wait to see what you're planning to do with that. The world generation seems absolutely flawless as of now. If you, sir, can deliver any other functions as flawless as this, which I believe you can pretty darn well, you have my vote and my recommendation from everyone I actually know." - Albel11

"While granted this is just a look at the game engine I can see amazing potential for this game. I love how it renders the building and environment on nightfall. It sounds like you have a very ambitious AI. Will be following to see where this one ends up." - gryklon

"Could possibly end up as the greatest game i may ever experience, and if any sort of multiplayer does make it then ill have to buy a copy for my friend so we can rule the voxel world!! or brutally die trying....." - キツニャ

"Pretty darn amazing so far, keep it up." - Syron

"Just from the base engine that is shown in the video, I can tell that the author has really put a lot of dedication into this project. I'm excited to see where it goes in the future!" - Resistor Twister

"Very ambitious project, I'm really looking forward to see it's outcome. Best of of luck for you!" - Chimera

"This Game is AMAZING" - Waax

"This looks very nice and interesting. Great job so far :)" - Saphira

"Looks stunning! Loved the look and feel of Outcast when it was released, so I find this very interesting. With all that hidden detail you're going to want to be blowing big chunks out of stuff in-game just to show it all off! ;) Voxels get my vote." - Cupio Minimus

"Nice initiative, and great job (Really you did that alone? :o) Good job" - Kratocelot

"I'm really looking forward for this game. And congratulations! amazing one man job!" - Chanchoman

"That's a damn cool engine!" - HayabusaJames

"This a great project! Can't wait to see more. I am working on a story/game design concept close to what you are doing right now. It would be interesting to see what can be done in the future with this kind of voxel engine." - Knightlord

"Wow the visuals are so fresh! Can't wait until we can get our hands on it!" - The Chezzi

"This looks genuinley unique! Absolutely love the aesthetic style and shading engine. The way the world renders is also visually appealing. Can't wait to see how this goes!" - Hyperchaotix

"This looks really impresive even in these early development stages. Keep up the good work, I can't wait to see where this ends up going." - John11888

"Looking unbelieveble awesome, even at this early stage! Definitly planning to buy it even if game will suck (but I hope it wont), :^) just to support development of engine." - gerr_kiddosse

"Honestly, I would buy your game if I could just randomly create and destroy your awesomely styled scenery. You have my vote for hopes that you can finish this with success!" - CeleriX

"It looks and sounds absolutely gorgeous and engrossing! Particularly the AI, I mean, a 'full emotional profile and temperament' - Peter Molyneux, eat your heart out! A day-one purchase, methinks. :D" - Mercy

"I'm sure it's been mention dozens of times already, but graphically this reminds me of Ubisoft's Little Big Adventure from way back when. Can't wait to see what you do with this cute engine." - ry

"I'm looking forward to playing this game! Thanks for your hard work XD" - Mint

"Thanks again, * Does goofy nerdy happy dance! will be watching this development for sure!" - TZODnmr2K5